Generative AI: Is It Legal?

Powerful AI models are presenting unprecedented challenges to conventional concepts of legal liability and copyright.

Generative AI has people talking. Many of the questions revolve around whether the robots are coming for your job or threatening the college essay, but one of the most interesting questions raised by the emergence of ChatGPT, Bard, and other models is not ethical or social but legal. (In fact, it has been suggested that the first professional group to benefit from generative AI will be lawyers.)

In this article we'll explore the issues — and possible solutions — connected with the widespread availability of these powerful technologies. They fall into two groups:

1) potential liability for the consequences of AI use and

2) infringement of intellectual property (IP) rights by the models themselves.

Potential liability implications for generative AI

As we covered in a recent post, the output of generative AI can sometimes be inaccurate or even inflammatory. From hurling insults and issuing threats to simply getting facts wrong, these chatbots can be a bit unruly. For most people, an insulting chatbot is good for a laugh. But, as The Verge reported, Bing Chat has gone so far as “emotionally manipulating people” and claiming that “it spied on Microsoft’s own developers through the webcams on their laptops.”

An emotionally manipulative chatbot in the wrong hands could have dire consequences — not to mention the privacy implications of an AI capable of spying on people. This is where serious liability concerns arise.

Who is responsible when someone uses generative AI in a way that harms another person or organization? The answer may not be clear, but companies can take steps to protect themselves.

Brookings suggests that generative AI developers start by making clear in their terms of service that their tools should not be used in areas where errors could have serious personal or financial consequences. In Brookings’ framing, such tools may be fine for “processes where errors are not especially important (e.g., clothing recommendations)” but not “for impactful socioeconomic decisions, such as educational access, hiring, financial services access, or healthcare.”

IP, AI and the law

Even more pressing are concerns about the infringement of IP rights. In January 2023, a group of artists filed a lawsuit against AI companies Stability AI Ltd, Midjourney Inc, and DeviantArt Inc for copyright infringement. In the U.K., stock photography provider Getty Images sued Stability AI over the alleged copying of millions of its images.

Some argue that 'fair use' applies, but that seems unlikely. As the Lawfare Institute explains, “In the United States, the fair use doctrine allows the exploitation of a copyrighted work for purposes such as criticism, comment, news reporting, teaching (including multiple copies for classroom use), scholarship, or research.” Training a commercial image generator does not obviously fall under any of these purposes. The January class action filed by artists accuses the companies of downloading or otherwise acquiring “copies of billions of copyrighted images without permission to create Stable Diffusion....” The suit says, “By training Stable Diffusion on the Training Images, Stability caused those images to be stored at and incorporated into Stable Diffusion as compressed copies. Stability made them without the consent of the artists and without compensating any of those artists.”

The first part of the suit addresses the images used as input to train the AI, but the complaint also takes aim at the output: “When used to produce images from prompts by its users, Stable Diffusion uses the Training Images to produce seemingly new images through a mathematical software process. These ‘new’ images are based entirely on the Training Images and are derivative works of the particular images Stable Diffusion draws from when assembling a given output. Ultimately, it is merely a complex collage tool.” The lawsuit also argues that these images compete in the marketplace with the originals.

The applicability of 'fair use' to the mechanisms behind generative AI is not clear even in the U.S. According to the law firm Osborne Clarke: “While US companies cite fair use as a defense in ongoing litigation, courts have not yet decided whether this exception applies in the context of AI.” In the EU, the situation is even less favorable for AI developers: “European copyright laws do not have a broad and flexible exception such as fair use. It is, therefore, more complicated to find copyright exceptions to justify the use of a third party's copyrighted works in the input or output of an AI system.”

It seems that the creators of generative AI tools that indiscriminately scrape information from the internet to train their algorithms may face some robust litigation. And we haven’t even begun to consider the potential legal ramifications for end users, primarily companies, that use a tool like ChatGPT or DALL-E to create commercial content that may infringe copyright.

Reining it in

So far, the industry’s response to these concerns has been voluntary. The MIT Technology Review reports, “A group of 10 companies, including OpenAI, TikTok, Adobe, the BBC, and the dating app Bumble, have signed up to a new set of guidelines on how to build, create, and share AI-generated content responsibly.”

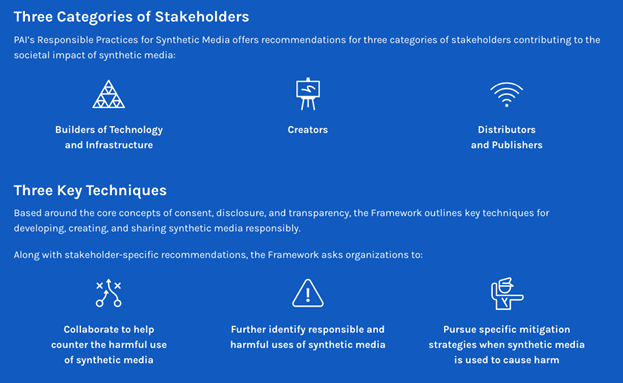

Key to these guidelines is transparency. As MIT Technology Review puts it, “The recommendations call for both the builders of the technology…and creators and distributors of digitally created synthetic media, such as the BBC and TikTok, to be more transparent about what the technology can and cannot do, and disclose when people might be interacting with this type of content.”

Partnership on AI (PAI) seeks to guide stakeholders on the ethical use of generative AI and synthetic media.

The three key principles set forth by Partnership on AI (PAI), which are consent, disclosure, and transparency, aim to provide guidance on using the tools responsibly. But compliance with the framework is ultimately voluntary, and the framework itself is unlikely to be read by most users. It also may not address one of the most significant unknown factors in the widespread deployment of generative AI: the users themselves. Unless the developers of AI models severely restrict what their tools are capable of, users will push the boundaries of what's acceptable and legal.

“Generative models can create non-consensual pornography and aid in the process of automating hate speech, targeted harassment, or disinformation,” reports Brookings. “These models have also already started to enable more convincing scams, in one instance helping fraudsters mimic a CEO’s voice in order to obtain a $240,000 wire transfer. Most of these challenges are not new in digital ecosystems, but the proliferation of generative AI is likely to worsen them all.”

It appears responsibility may ultimately fall on regulators to rein in generative AI. As Brookings suggests, “this might include information sharing obligations to reduce commercialization risks, as well as requiring risk management systems to mitigate malicious use.”

However, a paper from the European New School of Digital Studies suggests that some approaches are better suited to generative AI than current regulations, such as shifting responsibility to the “deployers and users,” since the original developers are often far removed from the end user.

Wild West for a while

What’s clear is that the rapid pace of development in generative AI is opening up new territory much faster than governments can devise legislation to regulate it. For the near future, at least, the sector is likely to be something of a Wild West of contested rights and liabilities until the institutions catch up with the technology.